Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

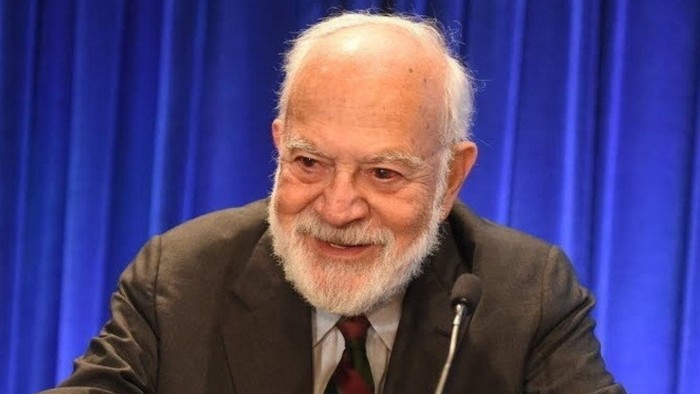

According to the philosopher Harry Frankfurt, lies are not the greatest enemy of truth. Bullshit is worse.

As he explained in his classic essay On Bullshit (1986), a liar and a truth-teller are playing the same game, just on opposite sides. Each responds to the facts as they understand them, and either accepts or rejects the authority of truth. A bullshitter, however, ignores these demands altogether. “He does not reject the authority of the truth, as the liar does, and oppose himself to it. He pays no attention to it at all. By virtue of this, bullshit is a greater enemy of the truth than lies are.” Such a person wants to convince others, irrespective of the facts.

Sadly, Frankfurt died in 2023, just a few months after ChatGPT was released. Yet reading his essay in the age of generative artificial intelligence provokes an uneasy sense of familiarity. In several respects, Frankfurt’s essay neatly describes the output of AI-enabled large language models. They are not concerned with truth because they have no conception of it. They operate by statistical correlation, not empirical observation.

“Their greatest strength, but also their greatest danger, is their ability to sound authoritative on nearly any topic irrespective of factual accuracy. In other words, their superpower is their superhuman ability to bullshit,” Carl Bergstrom and Jevin West have written. The two University of Washington professors run an online course, Modern-Day Oracles or Bullshit Machines?, that scrutinises these models. Others have renamed the machines’ output “botshit”.

One of the best-known and most unsettling, yet sometimes interestingly creative, features of LLMs is their “hallucination” of facts, or simply making stuff up. Some researchers argue that this is an inherent feature of probabilistic models, not a bug that can be fixed. AI companies, however, are trying to solve the problem by improving the quality of their data, fine-tuning their models, and building in verification and fact-checking systems.

They would appear to have some way to go. A lawyer for Anthropic told a California court this month that their law firm had itself unintentionally submitted an incorrect citation hallucinated by the AI company’s Claude. As Google’s chatbot flags to users: “Gemini can make mistakes, including about people, so double-check it.” That did not stop Google from rolling out an “AI Mode” across all its main services in the US this week.

The methods these companies use to improve their models, including reinforcement learning from human feedback, themselves risk introducing bias, distortion and undisclosed value judgments. As the FT has shown, AI chatbots from OpenAI, Anthropic, Google, Meta, xAI and DeepSeek describe the qualities of their own companies’ chief executives, and those of rivals, very differently. Elon Musk’s Grok has also promoted memes about “white genocide” in South Africa in response to wholly unrelated prompts. xAI said it had fixed the glitch, which it blamed on an “unauthorised modification”.

According to Sandra Wachter, Brent Mittelstadt and Chris Russell, in a paper from the Oxford Internet Institute, such models create a new and even worse category of potential harm, which they call “careless speech”. In their view, careless speech can cause intangible, long-term and cumulative harm. It is like “invisible bullshit” that makes society dumber, Wachter tells me.

With a politician or a salesperson, we can at least usually understand their motivation. Chatbots, however, have no intentionality and are optimised for plausibility and engagement, not truthfulness. They will invent facts for no purpose. They can pollute humanity’s knowledge base in unfathomable ways.

The intriguing question is whether AI models could be designed for greater truthfulness. Will there be market demand for them? Or should model developers be forced to abide by higher standards of truthfulness, as advertisers, lawyers and doctors are? Wachter suggests that developing more truthful models would take time, money and resources that current iterations are designed to save. “It’s like you want to be a plane

All that said, generative AI models can still be useful and valuable. Many lucrative business and political careers have been built on bullshit. Used appropriately, generative AI can be deployed in countless business use cases. But it is misguided, and dangerous, to mistake these models for truth machines.